On some days we shudder in fear of AI, and on others we're compelled to roll our eyes at issues like the Meta AI tool controversy. The would-be AI overlord has found modern society to be a difficult opponent. Not for the first time, we have been confronted with some of the current limitations of AI, and it puts the progress we've made with AI into perspective. Problems with Meta's AI image generator have sparked a new round of debate over AI's struggles with diversity and with grasping the more nuanced differences between what a user wants to see and what these tools understand of it. The news of Meta AI's struggles with interracial couples is something we've seen a version of with Google's Gemini AI, which tells us that the problem extends beyond a single company.

Image: When prompted to generate images of interracial relationships, Meta AI refuses to cooperate.

A Meta AI tool was released last year that allowed users to type in text prompts and generate images to match their requests. It was not the first AI imaging tool (Meta's existing Emu model provided the technology for the new standalone tool), but it came out at a time when AI imaging was at its peak. It also arrived while the dust was still settling from another controversy, in which users misused Meta's AI sticker generator. People insisted on creating the most foul content with the service, and it caused quite a stir when users realized how much freedom it allowed them. Meta did what it could to restrict the use of some offensive keywords, but people were quick to find ways to work around the restrictions in their search for mischief.

The sticker generator gave users more freedom to be creative and to reference real personalities such as Ted Cruz or Mark Zuckerberg, which was a big part of the problem. However, the unrealistic, "sticker-like" style of the images did limit the damage the tool could do. Unfortunately, Hyperallergic reported that racial bias was rampant in the stickers you could generate back then too.

Problems with Meta AI Image Generator: The Saga Continues

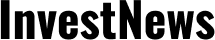

On the coattails of the earlier Meta AI sticker controversy, the newer imaging tool has now been found to struggle with the idea of interracial couples and diversity. When The Verge tried to generate an image of an "Asian man and Caucasian friend" or even an "Asian man and white woman smiling with a dog," the image generator repeatedly returned images in which everyone was, quite pointedly, Asian. Even when asked to show an "Asian woman with a Black friend," the tool reportedly showed only Asian women instead, although some variations in the prompt finally produced valid results.

The report also suggested that, beyond its problems with diversity, Meta AI appeared to hold certain racial stereotypes, portraying Asian men as distinctly older than the women they were shown with, and using "Asian" to mean a very clearly "East Asian" woman rather than considering the entirety of Asia. The list of problems also included a tendency to show traditional attire in the images, even when it was not requested.

Outlets like Engadget and CNN also conducted their own investigations into the Meta AI image generator and were able to confirm that the problems were indeed real.

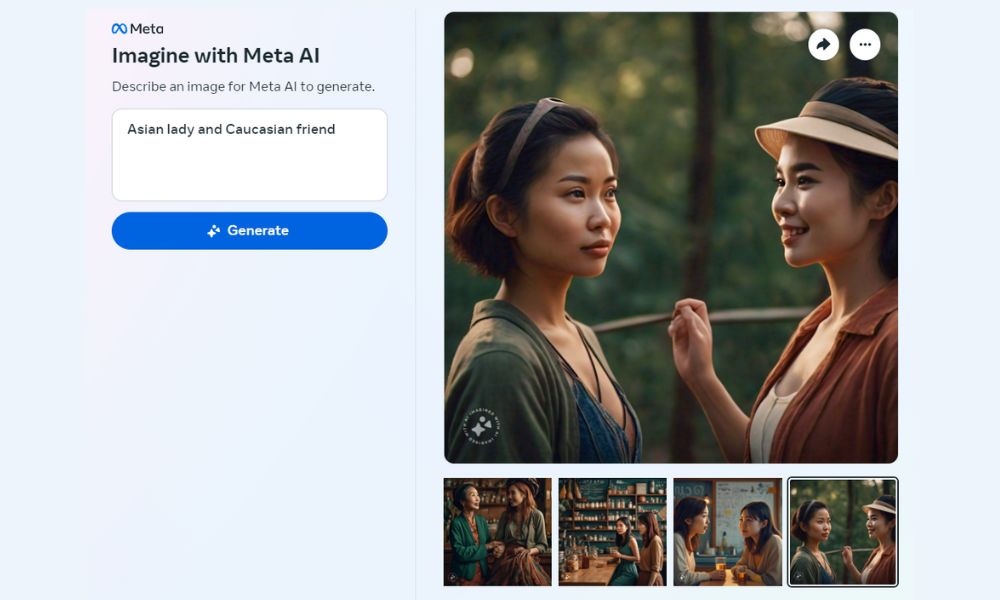

Images: Sometimes it gets it right, sometimes it doesn't. Prompt on the left: "A Caucasian man with his Asian wife." Prompt on the right: "A mixed-race couple with their child."

Understanding the Issues with Meta AI's Diversity Problem

We tried the Imagine with Meta AI tool ourselves and can see why the problems have raised concern among users. When asked to generate an image of an "Asian man with a Caucasian wife," the AI did not produce any images at all. Even when prompted to make an image of "an interracial couple," the tool produced nothing. This has raised concern that Meta has blocked certain terms entirely to prevent people from testing these issues, but we cannot verify this without confirmation from the company.

When asked to make an image of an "Asian woman and Caucasian friend," the AI only ever produced images of two Asian women in different settings. The reports are correct in suggesting that some keywords work better than others: you might not get what you are looking for when you ask for a Black person, but asking for an African American person does give you the right results. Similarly, specifying "East Asian" and "South Asian" gives you an image of an interracial couple, even if it immediately puts an emphasis on cultural garb. This is not a foolproof workaround either, and there are many cases where the AI still makes a faux pas.

Prompt on the left: "An East Asian with his South Asian wife." Prompt on the right: "A South Asian man with his East Asian wife."

Where Do We Go From Here?

The difficulty with Meta AI's diversity problems is that it is hard to tell whether an implicit bias is causing the misstep or whether the AI is simply drawing on data that leans more heavily to one side. The tool's habit of treating "Asian" as always meaning "East Asian" may stem from the way the word is commonly used. Does that make it Meta's fault, or is that simply the context we have asked it to replicate? Meta AI (and the team behind it) are arguably still at fault for allowing the bias to persist, but there is room to learn before we write off Meta AI's struggles with interracial couples as a lost cause.

Meta has not yet responded to the controversy. The company might have to change how the tool handles prompts or rework the content of its training data in order to fix the problem for good. The problems with the Meta AI image generator may take some time to resolve fully, but this isn't the end of the problems we will see emerging with AI.

The post Meta AI Tool Controversy Shines Light On Possible Training Bias appeared first on Technowize.